As part of our continuous efforts in open source sponsorship, we have recently started working with Julian Gruber (Twitter, GitHub), who is a highly involved open source maintainer, located in Germany.

Julian recently developed a new Op for The Ops Platform called test-npm-dependants, which he showcases in this blog post. He also discusses why The Ops Platform, rather than regular Node Package Manager (NPM) scripts, is the right platform for the tools he's creating.

Modern software is a network effect

If you are involved in creating software in any kind of modern language, you are probably aware of the wild amount of collaboration happening in the open source world. Hundreds, thousands, or even millions of packages (depending on the language) just waiting for you to build your project upon—for free. Most of these packages are maintained to various degrees by the open source community and they often consume each other in order to provide their functionality to you.

For a creator or maintainer of such a package, this can mean many things, but for your company's IT compliance department, it probably means very different things! No matter your perspective, this is a massive living network of software, and it's up to you to get the best out of it. It's even better if you can turn what others might judge to be a disadvantage into a benefit.

Testing NPM dependants

The Op that I recently created comes from an idea that I've wanted to build out for a while, but never found the time or the platform to sensibly build it upon. Both of these obstacles are gone now, and this post will demonstrate why The Ops Platform removed the platform obstacle.

The concept for this Op is simple: Given a software package, run the test suites of the packages depending on your package (the dependants), in order to tell you something about the stability of your package. We use the network effect to our advantage, appreciating the rich interconnectedness of modern software instead of shying away from it.

We don't just run the test suites once, though: we run them for two versions of the package you maintain. This approach can answer the following questions:

- If a new version of a package isn't supposed to be a breaking change, is this actually true for all the packages depending on it? Does all the software still work if they upgrade to the new version?

- If the new version of a package is intentionally a breaking change, how much of the ecosystem will actually break?
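To make the comparison concrete, here is a minimal sketch of how the results of two such runs could be classified. It is not the Op's actual implementation: the function name `compareRuns`, the result shape, and the dependant names are all hypothetical and chosen only for illustration.

```javascript
// Hypothetical helper, not part of test-npm-dependants.
// `before` and `after` map a dependant's name to true (its test suite passed)
// or false (it failed) for the old and the new version of your package.
function compareRuns(before, after) {
  const report = { stillPassing: [], fixed: [], broke: [], stillFailing: [] };
  for (const name of Object.keys(before)) {
    // Only compare dependants that were tested against both versions.
    if (!(name in after)) continue;
    const passedBefore = before[name];
    const passedAfter = after[name];
    if (passedBefore && passedAfter) report.stillPassing.push(name);
    else if (!passedBefore && passedAfter) report.fixed.push(name);
    else if (passedBefore && !passedAfter) report.broke.push(name);
    else report.stillFailing.push(name);
  }
  return report;
}

// Made-up example data: four dependants, tested against two versions.
const exampleReport = compareRuns(
  { 'dep-a': true, 'dep-b': false, 'dep-c': true, 'dep-d': false },
  { 'dep-a': true, 'dep-b': true, 'dep-c': false, 'dep-d': false }
);
console.log(exampleReport);
```

For a non-breaking release, the `broke` list should ideally be empty. For an intentional breaking change, its length gives a rough measure of how much of the ecosystem is affected.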

The first question is especially crucial for maintainers of heavily used packages. Take for example Express.js, a very popular Node.js package with over 11 million weekly downloads (at the time of writing). If you head to their versions tab, you can see that among others there are multiple stable 4.x releases as well as those for an upcoming 5.x release. We're going to use this as the example in this post.

We can now use test-npm-dependants to

- verify that updating to a newer 4.x version doesn't break anything

- check how much of the ecosystem breaks by updating to the new major 5.x version

Let's go

If you haven't yet set up The Ops CLI on your computer, follow the instructions in Getting Started. Make sure you are also logged in to your account and have Docker up and running.

To run any op, issue:

$ ops run ACCOUNT/NAME

In our case, the command is:

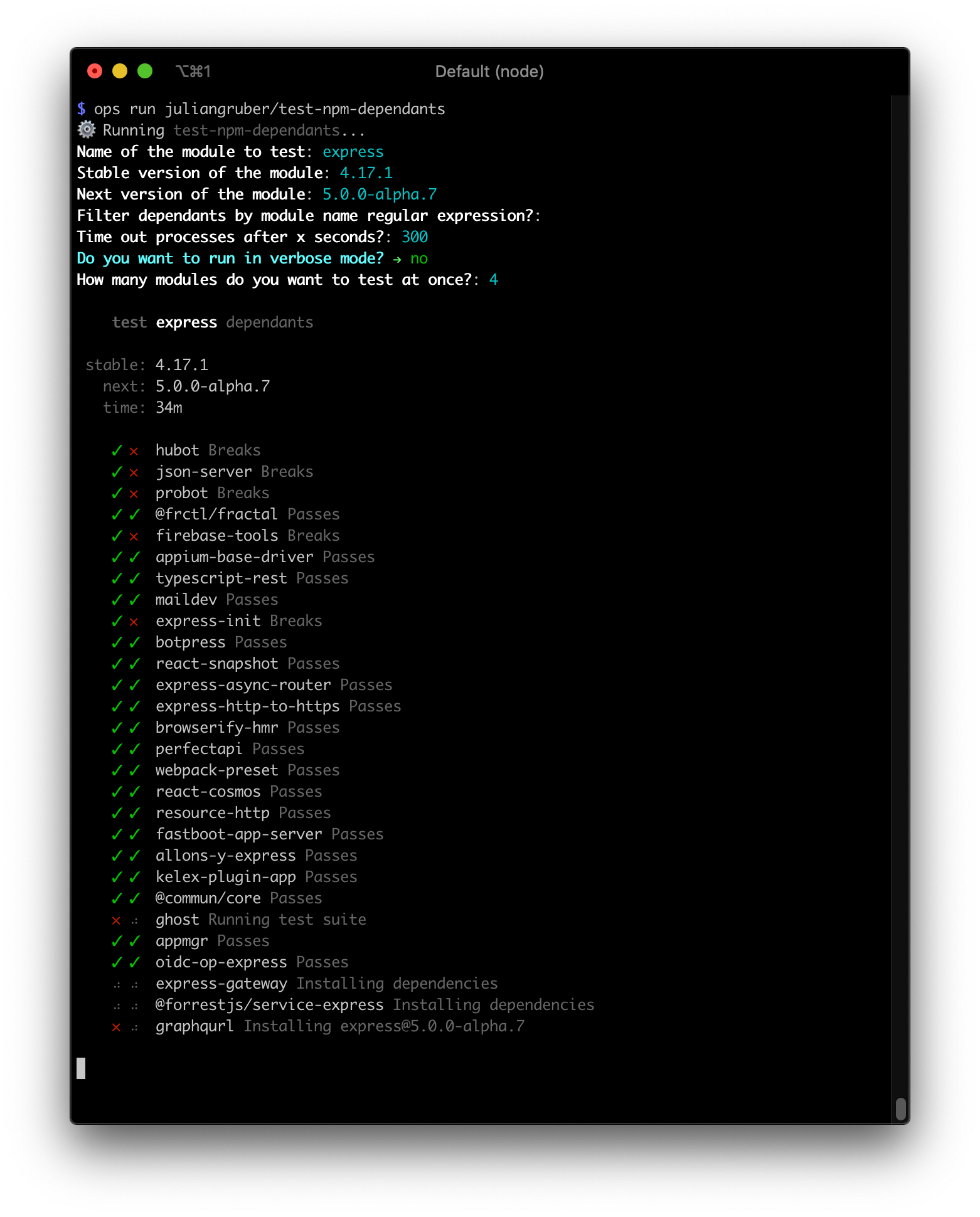

$ ops run juliangruber/test-npm-dependants

As implemented by the open source SDK, you will be prompted for a few arguments. You can also provide them through --flags to skip the prompts.

Here we're going to test two version pairs:

- [email protected] 👉 [email protected]: A major version bump, so issues are expected. But just how much of the ecosystem would break?

- [email protected] 👉 [email protected]: A minor version bump, so only features should have been added, and bugs fixed. Which packages were actually fixed, and did some break by mistake?

Express alpha

Grab a cup of coffee, enter the following arguments into the Op, and watch as it runs one test suite after another, in a Docker container automatically provisioned by The Ops CLI:

[email protected] is a major version bump, so breakage is expected. What is interesting, however, is that most of these packages still work, because they aren't using any of the APIs that were changed. Which is great! As a maintainer, you can check out the code of the modules that broke and, if one of them is an important module, provide guidance on how to upgrade to the new version.

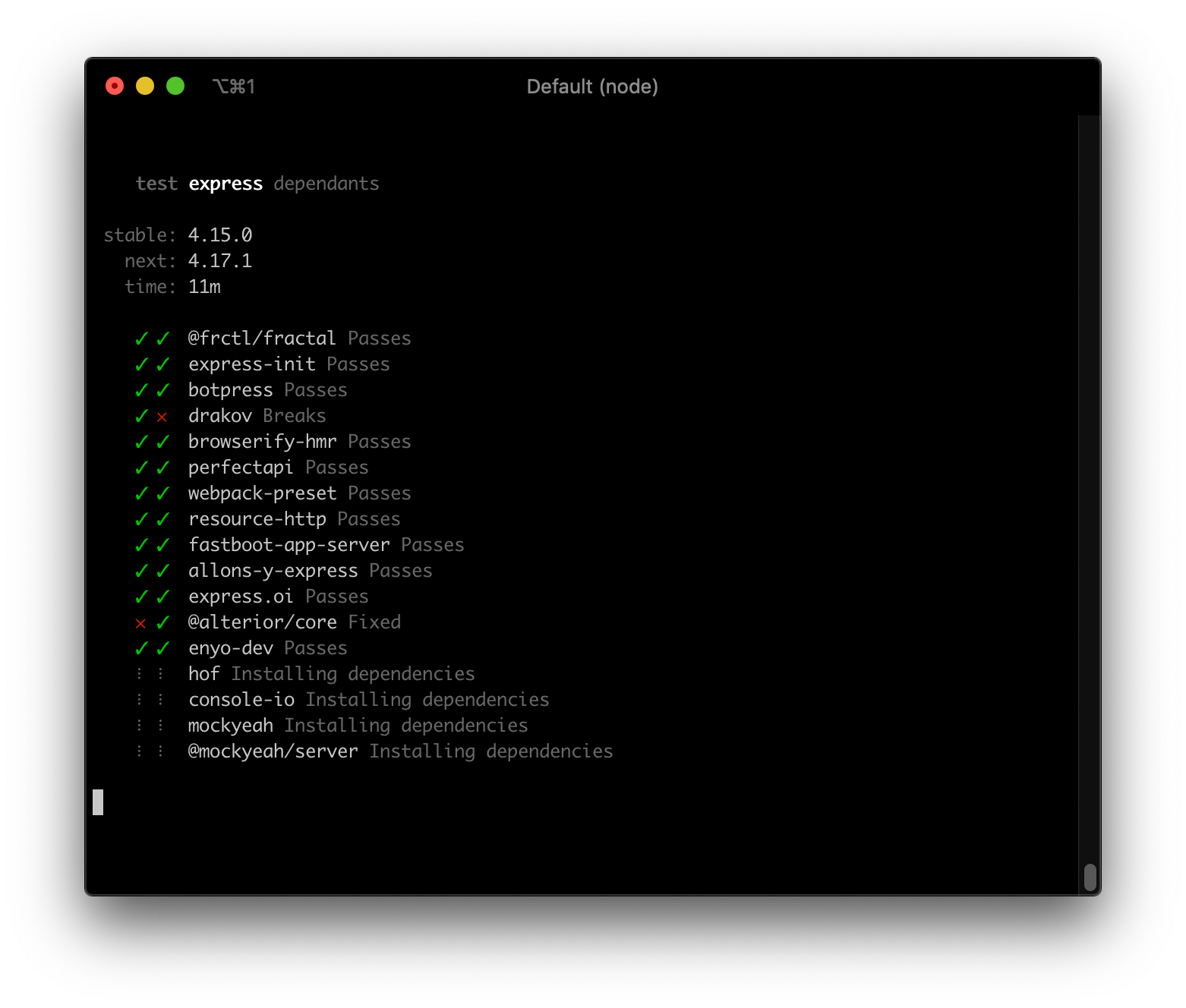

Express stable

The second example is the more conventional one. If we upgrade from a stable to another compatible stable version of express, does everything still work? And surprisingly (and luckily for me writing this post), at least one module was fixed, and at least one module broke!

The module that broke could, for example, have been relying on an undocumented API or behavior. Changing such behavior is usually not considered a breaking change.

These commands are easy to run. Take the time to run them since these kinds of insights can tell you a lot about the ecosystem.

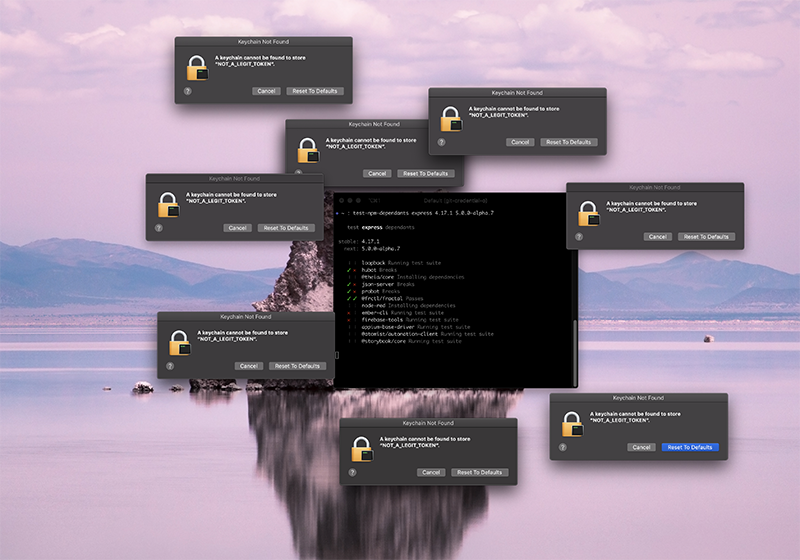

A word on security

Running a bunch of untrusted code on your computer—with access to your files and your network—is clearly dangerous. Most of the time, it works just fine, but sometimes it doesn't. While I was developing this Op, I ran it through npm (not Ops) and I encountered this situation:

One of the test suites was trying to access my personal macOS Keychain, the place where most of my passwords are stored! Another test suite was even reading my GitHub credentials and created a few repositories in my name. It could also have deleted some! This is scary and has to be avoided. Fortunately, there is a solution.

With The Ops Platform, this risk is greatly reduced. Every Op you run is executed inside a Docker container. This makes every Op portable and makes it much more difficult for the code to access your system.
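As a rough illustration of the idea, and not of how The Ops Platform provisions its containers, a dependant's test suite could be run inside a throwaway container in which only the project directory is mounted. The helper names `dockerArgs` and `runTestsInContainer`, the `node:lts` image, and the `/work` mount point are assumptions made for this sketch. The `docker run` flags used (`--rm`, `--network`, `-v`, `-w`) are standard Docker CLI options.

```javascript
const { spawnSync } = require('child_process');

// Hypothetical helper: build the argument list for `docker run`.
// Only `projectDir` is mounted, so the code inside the container cannot see
// the rest of your home directory (credentials, keychain files, and so on).
function dockerArgs(projectDir, { image = 'node:lts', network = true } = {}) {
  const args = ['run', '--rm']; // --rm removes the container when it exits
  // Optionally cut off network access; note that `npm install` needs it.
  if (!network) args.push('--network', 'none');
  args.push(
    '-v', `${projectDir}:/work`, // mount the project directory only
    '-w', '/work',               // use it as the working directory
    image,
    'sh', '-c', 'npm install && npm test'
  );
  return args;
}

// Hypothetical helper: run the tests and report whether they passed.
// Requires a local Docker installation; it is not called in this sketch.
function runTestsInContainer(projectDir, options) {
  const result = spawnSync('docker', dockerArgs(projectDir, options), {
    stdio: 'inherit',
  });
  return result.status === 0;
}

console.log(dockerArgs('/tmp/some-dependant').join(' '));
```

A container is not a perfect sandbox, but limiting what is mounted and what is reachable over the network removes the easiest paths to your personal files.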

I would like to see more CLIs and platforms adopt this containerization approach for running 3rd-party code, as it can save you from a lot of headaches.

Conclusion

We hope you enjoyed reading this article. Go check out juliangruber/test-npm-dependants in The Ops Platform registry!

Also, please follow and support Julian on GitHub and Twitter for more great contributions like this. Thanks for reading!
